In my last post on the “you build it, you run it” principles behind DevOps I explained how “packing your own parachute” gives developers more of an incentive to care about what happens when their handiwork reaches production, because they have to deal with the fallout. But this is not enough to guarantee sufficient quality in a modern software development environment.

For the developers out there, think about your current software development process. Chances are if you’re doing this as a career you’re not locked in the basement by yourself reinventing logging (or maybe you are).

More than likely you’re working as part of a team on some unique business-specific configuration of standard patterns. Maybe you pair program line-by-line or you share efforts on the same classes. Perhaps you just share responsibility with others for common services, or at least you’re working together with others as parts of a common functional ecosystem or platform.

Even most open-source projects of any note are not the result of one main protagonist but the result of carefully coordinated collaboration, with a few notable exceptions.

So then, if we build it as a team, we run it as a team. Fair enough, right? But how well does this correspond to real practice?

Most teams I’ve been in, or observed, have either had a designated support contact for a functional group (often a luckless manager or senior technical lead) or the dreaded rota where the parcel is passed around and everyone hopes the music doesn’t stop while they’re holding it.

Even in the latter case, the rota often just acts as a reverse proxy into the manager or technical lead. This is because the complexity of software systems these days often means a vast amount of knowledge is needed to correctly troubleshoot problems and narrow down the likely source of grief.

In other words, we may have packed that parachute as a team, but it’s only on the back of one person out of the plane at any one time.

Perhaps then a better aphorism for how DevOps works in practice might be:

“You break it, you own it”

(a slight variation on what those in the US may know as the Pottery Barn rule: “you break it, you buy it”)

Why “you break it, you own it” is an effective team strategy

It’s 3am Saturday and the developer on support duty is brutally awoken by that foreign phone number that only ever means Operations has a problem. They are dragged into a conference call with panicking Ops members on the other side of the world, who give erudite problem statements like “it’s not working” and “application is crashed”.

It’s a critical production problem; the EOD batch will not progress past a certain point. The support manager in the forward time-zone joins the call to demand restatement of the last five minutes of conversation, despite having no ability to make a difference. Finally, after wading through myriad security systems just to get access to the support logs, the developer sees some peculiarities coming from a particular package. And that package has a time-stamp from last night…

They say that no light can escape a black hole. But I don’t think anything is as dark as the face of a developer when he views the change-log to find the new Big Brand consultant has pushed an infinite loop with a FIXME comment to do the needful.

How Modern Corporate Economics Can Break the DevOps Dream

In this era (error?) of outsourcing, development work in the enterprise often filters up to the least capable person who can still make the changes that fulfil the minimum functional need.

Conversely, when the muck hits the fan, emergency production support tends to filter down to the most capable person who can solve the problem at hand.

The reality in many teams is that a few of the more capable people (or the ones who have been “rewarded” with a Blackberry) end up taking on the majority of the production issues. Yet they won’t always have an input into the build process that can cause the issues, in part because they become so involved in fire-fighting the problems reaching production, and in part because many corporate environments “asset strap” under-skilled developers to these stars.

In theory, as much time will be spent on prevention as on cure. The reality, however, is that the asymmetry in importance between production and development often means time can’t be spent trying to track down the right developer when everyone knows that Bob will get it solved more quickly.

“Let’s not worry about who is to blame. Just get it working, Bob…now!” says Mr. CIO (and “Tell me who made the change” the email the next day will read).

Why “You build it, you run it” often isn’t enough

The theory behind “You build it, you run it” I do subscribe to, but how well does it play out in practice? A young startup can get away with it, but for those of you in large enterprises, especially those in heavily regulated industries like the financial sector, it’s increasingly not an option.

Segregation of duties is one of the phrases engraved on every corporate heart following the aftershocks of Enron and resulting legislation like the Sarbanes-Oxley Act and others of its nature. So despite the premise, and promise, of DevOps, there will often be an unbridgeable gap between development and operations in large organisations.

What can we do about it?

Continuous Testing and The Pottery Barn Rule

“You build it, you run it” implies that the person making the change will run the application in production and therefore be the first-response team when something goes wrong. The theory is that if you have to make it behave as required in production, you’ll do more to get it right when the code makes the leap from development. As I’ve mentioned, there are a number of things blocking this in practice.

As an alternative mantra, “You break it, you own it” need not imply production operation at all. However it does require systems in place to identify, precisely, and at the earliest opportunity, who has broken any functionality many steps before it could ever get into production.

Continuous Testing is how we achieve this.

Continuous Testing means that we are constantly testing every change made, automatically and, ideally, without conscious effort from a developer, such that it’s immediately identifiable who broke the software and how.
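As a rough illustration of the idea, and not a description of any particular tool, the sketch below runs a test suite against each change in push order and reports the author of the first change that turns it red. The `Change` record, the `first_breaking_change` function and the toy pricing suite are all hypothetical names made up for this example.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass(frozen=True)
class Change:
    """Hypothetical record of one pushed change."""
    commit_id: str
    author: str
    # The code under test as it stands after this change (assumed shape).
    price_with_tax: Callable[[float], float]


def suite_passes(change: Change) -> bool:
    """Toy test suite: 20% tax on 100.0 must give 120.0."""
    return abs(change.price_with_tax(100.0) - 120.0) < 1e-9


def first_breaking_change(
    changes: Iterable[Change],
    suite: Callable[[Change], bool] = suite_passes,
) -> Optional[Change]:
    """Test every change in push order; return the first one that fails."""
    for change in changes:
        if not suite(change):
            return change
    return None


history = [
    Change("a1", "alice", lambda p: p * 1.2),
    Change("b2", "bob", lambda p: p * 1.2 + 0.0),
    Change("c3", "consultant", lambda p: p + 1.2),  # breaks the tax rule
    Change("d4", "alice", lambda p: p + 1.2),       # inherits the breakage
]

culprit = first_breaking_change(history)
if culprit is not None:
    print(f"{culprit.commit_id} by {culprit.author} broke the build")
```

Because every change is tested as it arrives, the later commit that merely inherits the breakage is never blamed for it. A real pipeline would do this on every push rather than over a list in memory.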

To do this a new breed of cloud testing tools is required to cater for changes in where code is stored (GitHub, BitBucket, etc.), how it is built (CloudBees Jenkins, Circle CI, etc.) and both the ephemeral nature and size of consumers for services in the cloud.

In the old world of testing you may have had some basic unit tests that check a setter indeed sets a variable because you wanted to improve the code coverage percentage figure of your project because that’s what your manager checks. These are nothing but vanity statistics in many cases.

But what does this do to prove that when there are a thousand users of your e-commerce site, congestion doesn’t time out users on the Purchase Now button? That the links you have to your blog don’t give 404s? That a network partition won’t cause faults in the distributed cluster master election coordination?

It won’t. It can’t. Most evolutionary iterations towards continuous integration systems simply won’t be set up to catch all of these problems. It needs a rethink of where testing sits in the hierarchy of development.
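To make one of those questions concrete, here is a minimal sketch of the broken-links check, the sort of test a setter-level unit test will never stand in for. It is an assumption-laden toy: `find_broken_links`, `fake_fetch` and the `FAKE_SITE` table are hypothetical, and the HTTP client is replaced by a lookup table so the example runs offline.

```python
from typing import Callable, Dict, Iterable, List


def find_broken_links(
    urls: Iterable[str],
    fetch_status: Callable[[str], int],
) -> List[str]:
    """Return the URLs whose HTTP status is 400 or above."""
    return [url for url in urls if fetch_status(url) >= 400]


# Stand-in for a real HTTP client so the sketch runs without a network.
FAKE_SITE: Dict[str, int] = {
    "https://example.com/blog": 200,
    "https://example.com/blog/old-post": 404,
    "https://example.com/pricing": 200,
}


def fake_fetch(url: str) -> int:
    # Unknown URLs are treated as missing pages.
    return FAKE_SITE.get(url, 404)


broken = find_broken_links(FAKE_SITE.keys(), fake_fetch)
print(broken)
```

Passing the fetcher in as a parameter is a deliberate choice: the same check can run against a fake in development and against a real client in a pipeline stage.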

Mo’ Nodes, Mo’ Problems

In the traditional closed-ecosystem of the enterprise, life was much simpler. Mock a few endpoints in testing to cater for limited externalities on high-availability (and high-cost) internal infrastructure, and test the UI on the standard corporate desktop configurations. Job done.

External system X fails to give the service? They get the blame in the global outage mails.

User Y can’t use the application on Chrome instead of standard issue Internet Explorer 8? Refer them to Corporate Policy 473.12 and send them back to IE hell.

With the proliferation of Software-as-a-Service, mobile apps, service integrations and cloud hosting (with the increased risk of network partitions and failure rates of commodity hardware) there is an ever-increasing demand for testing to accurately reflect the expected use in production.

And it needs to do so automatically, and without intervention. Distributed computing is complex, and requires the developers to have a deep understanding not just of what their application needs to do, but how every technology used will react in a given situation.

Continuous Testing should mean all Testing is First-Class

Continuous Testing systems should recognise that all testing deserves to be first-class. Distributed systems in the cloud, in particular, are more susceptible to the butterfly effect from changes than much of the corporate workforce is used to, and the range of possible problems grows by the year.

At TestZoo we’ve built a service that aids continuous testing to cope with this new norm of massive-volumes, distributed and unreliable networks, geographically dispersed teams and clients, non-standardised devices, incompetent users, harmful agents, multitudinous attack vectors, complex webs of external service dependencies, and increasingly rapid delivery requirements.

Distributed web load testing is only just the beginning for us, and you can expect to see fast expansion in what we offer to cope with these different dimensions.

For anyone that has ever felt that flood of adrenaline when a potentially career-ending bug in someone else’s code has been caught, by chance, moments before release into production, the value of having an always-available continuous testing service is clear.

No one likes a tattletale, we know, but identifying problems and those that cause them before the pain is shared amongst others is important.

What are your war stories? I know I’m not the only one to have “how could you possibly think that was a good idea!?” moments when debugging production logs at 3am…

IE Cat by Noah Sussman

Break Dancing by Padmanaba01

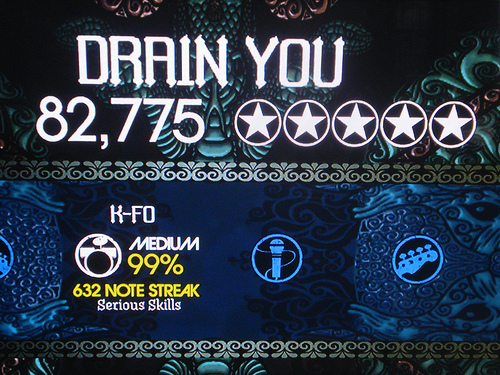

Drain You by robinmcnicoll